Instructor-Led Training Parameters

Course Highlights

- Instructor-led Online Training

- Project-Based Learning

- Certified & Experienced Trainers

- Course Completion Certificate

- Lifetime e-Learning Access

- 24x7 After Training Support

Big Data and Hadoop Training Course Overview

Big Data and Hadoop training is essential to understanding the power of Big Data. The training introduces Hadoop, MapReduce, and the Hadoop Distributed File System (HDFS). It walks you through distributed processing of large data sets across clusters of computers and through administering Hadoop. Participants learn how to handle heterogeneous data coming from different sources. This data may be structured or unstructured and can include communication records, log files, audio files, pictures, and videos.

With this comprehensive training, you’ll learn the following:

- How Hadoop fits into the real world

- Role of Relational Database Management System (RDBMS) and Grid computing

- Concepts of MapReduce and HDFS

- Using Hadoop I/O to write MapReduce programs

- Developing MapReduce applications to solve problems

- Setting up and administering a Hadoop cluster

- Pig for creating MapReduce programs

- Hive, data warehouse software for querying and managing large datasets residing in distributed storage

- HBase implementation, installation, and services

- Installation and group membership in ZooKeeper

- Using Sqoop to control imports and maintain consistency

This training is suited to:

- Data architects

- Data integration architects

- Data scientists

- Data analysts

- Decision makers

- Hadoop administrators and developers

Candidates with a basic understanding of computers and SQL, and elementary programming skills in Python, are ideal for this training.

Instructor-led Training Live Online Classes

Suitable batches for you

| Month | Batch | Days |
| --- | --- | --- |
| May 2026 | Weekdays | Mon-Fri |
| May 2026 | Weekend | Sat-Sun |
| Jun 2026 | Weekdays | Mon-Fri |
| Jun 2026 | Weekend | Sat-Sun |

Big Data and Hadoop Training Course Content

Meet Hadoop

- Data!

- Data Storage and Analysis

- Comparison with Other Systems

- RDBMS

- Grid Computing

- Volunteer Computing

- A Brief History of Hadoop

- Apache Hadoop and the Hadoop Ecosystem

- Hadoop Releases

MapReduce

- A Weather Dataset

- Data Format

- Analyzing the Data with Unix Tools

- Analyzing the Data with Hadoop

- Map and Reduce

- Java MapReduce

- Scaling Out

- Data Flow

- Combiner Functions

- Running a Distributed MapReduce Job

- Hadoop Streaming

- Compiling and Running
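
The map, shuffle, and reduce phases listed in this module can be sketched in plain Python. This is a minimal in-memory illustration, not Hadoop code: the `year,temperature` record format, the sample values, and the function names (`map_record`, `shuffle`, `reduce_max`, `run_job`) are all assumptions made for the example, and a real job would read from HDFS and run across a cluster.

```python
from collections import defaultdict

# Hypothetical sample records in "year,temperature" form (assumed format,
# not the layout of any real weather dataset).
RECORDS = ["1950,0", "1950,22", "1950,-11", "1949,111", "1949,78"]


def map_record(line):
    """Map phase: emit one (year, temperature) pair per input line."""
    year, temp = line.split(",")
    yield year, int(temp)


def shuffle(pairs):
    """Shuffle phase: group values by key between the map and reduce phases."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_max(year, temps):
    """Reduce phase: collapse all values for one key into a single result."""
    return year, max(temps)


def run_job(lines):
    """Run map, shuffle, and reduce in sequence on an in-memory list of lines."""
    pairs = (pair for line in lines for pair in map_record(line))
    grouped = shuffle(pairs)
    return dict(reduce_max(year, temps) for year, temps in sorted(grouped.items()))


if __name__ == "__main__":
    print(run_job(RECORDS))  # {'1949': 111, '1950': 22}
```

Because taking a maximum is associative and commutative, the same `reduce_max` logic could also serve as a combiner, which is the idea behind the Combiner Functions topic above.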

The Hadoop Distributed Filesystem

- The Design of HDFS

- HDFS Concepts

- Blocks

- Namenodes and Datanodes

- HDFS Federation

- HDFS High-Availability

- The Command-Line Interface

- Basic Filesystem Operations

- Hadoop Filesystems

- Interfaces

- The Java Interface

- Reading Data from a Hadoop URL

- Reading Data Using the FileSystem API

- Writing Data

- Directories

- Querying the Filesystem

- Deleting Data

- Data Flow

- Anatomy of a File Read

- Anatomy of a File Write

- Coherency Model

- Parallel Copying with distcp

- Keeping an HDFS Cluster Balanced

- Hadoop Archives

Hadoop I/O

- Data Integrity

- Data Integrity in HDFS

- LocalFileSystem

- ChecksumFileSystem

- Compression

- Codecs

- Compression and Input Splits

- Using Compression in MapReduce

- Serialization

- The Writable Interface

- Writable Classes

- File-Based Data Structures

- SequenceFile

- MapFile

Developing a MapReduce Application

- The Configuration API

- Combining Resources

- Variable Expansion

- Configuring the Development Environment

- Managing Configuration

- GenericOptionsParser, Tool, and ToolRunner

- Writing a Unit Test

- Mapper

- Reducer

- Running Locally on Test Data

- Running a Job in a Local Job Runner

- Testing the Driver

- Running on a Cluster

- Packaging

- Launching a Job

- The MapReduce Web UI

- Retrieving the Results

- Debugging a Job

- Hadoop Logs

- Tuning a Job

- Profiling Tasks

- MapReduce Workflows

- Decomposing a Problem into MapReduce Jobs

- JobControl

How MapReduce Works

- Anatomy of a MapReduce Job Run

- Classic MapReduce (MapReduce 1)

- Failures

- Failures in Classic MapReduce

- Failures in YARN

- Job Scheduling

- The Capacity Scheduler

- Shuffle and Sort

- The Map Side

- The Reduce Side

- Configuration Tuning

- Task Execution

- The Task Execution Environment

- Speculative Execution

- Output Committers

- Task JVM Reuse

- Skipping Bad Records

MapReduce Types and Formats

- MapReduce Types

- The Default MapReduce Job

- Input Formats

- Input Splits and Records

- Text Input

- Binary Input

- Multiple Inputs

- Database Input (and Output)

- Output Formats

- Text Output

- Binary Output

- Multiple Outputs

- Lazy Output

- Database Output

MapReduce Features

- Counters

- Built-in Counters

- User-Defined Java Counters

- User-Defined Streaming Counters

- Sorting

- Preparation

- Partial Sort

- Total Sort

- Secondary Sort

- Joins

- Map-Side Joins

- Reduce-Side Joins

- Side Data Distribution

- Using the Job Configuration

- Distributed Cache

- MapReduce Library Classes

Setting Up a Hadoop Cluster

- Cluster Specification

- Network Topology

- Cluster Setup and Installation

- Installing Java

- Creating a Hadoop User

- Installing Hadoop

- Testing the Installation

- SSH Configuration

- Hadoop Configuration

- Configuration Management

- Environment Settings

- Important Hadoop Daemon Properties

- Hadoop Daemon Addresses and Ports

- Other Hadoop Properties

- User Account Creation

- YARN Configuration

- Important YARN Daemon Properties

- YARN Daemon Addresses and Ports

- Security

- Kerberos and Hadoop

- Delegation Tokens

- Other Security Enhancements

- Benchmarking a Hadoop Cluster

- Hadoop Benchmarks

- User Jobs

- Hadoop in the Cloud

- Hadoop on Amazon EC2

Administering Hadoop

- HDFS

- Persistent Data Structures

- Safe Mode

- Audit Logging

- Tools

- Monitoring

- Logging

- Metrics

- Java Management Extensions

- Routine Administration Procedures

- Commissioning and Decommissioning Nodes

- Upgrades

Pig

- Installing and Running Pig

- Execution Types

- Running Pig Programs

- Grunt

- Pig Latin Editors

- An Example

- Generating Examples

- Comparison with Databases

- Pig Latin

- Structure

- Statements

- Expressions

- Types

- Schemas

- Functions

- Macros

- User-Defined Functions

- A Filter UDF

- An Eval UDF

- A Load UDF

- Data Processing Operators

- Loading and Storing Data

- Filtering Data

- Grouping and Joining Data

- Sorting Data

- Combining and Splitting Data

- Pig in Practice

- Parallelism

- Parameter Substitution

Hive

- Installing Hive

- The Hive Shell

- An Example

- Running Hive

- Configuring Hive

- Hive Services

- Comparison with Traditional Databases

- Schema on Read Versus Schema on Write

- Updates, Transactions, and Indexes

- HiveQL

- Data Types

- Operators and Functions

- Tables

- Managed Tables and External Tables

- Partitions and Buckets

- Storage Formats

- Importing Data

- Altering Tables

- Dropping Tables

- Querying Data

- Sorting and Aggregating

- MapReduce Scripts

- Joins

- Subqueries

- Views

- User-Defined Functions

- Writing a UDF

- Writing a UDAF

HBase

- HBasics

- Backdrop

- Concepts

- Whirlwind Tour of the Data Model

- Implementation

- Installation

- Test Drive

- Clients

- Java

- Avro, REST, and Thrift

- Schemas

- Loading Data

- Web Queries

- HBase Versus RDBMS

- Successful Service

- HBase

ZooKeeper

- Installing and Running ZooKeeper

- Group Membership in ZooKeeper

- Creating the Group

- Joining a Group

- Listing Members in a Group

- Deleting a Group

- The ZooKeeper Service

- Data Model

- Operations

- Implementation

- Consistency

- Sessions

- States

Sqoop

- Getting Sqoop

- A Sample Import

- Generated Code

- Additional Serialization Systems

- Database Imports: A Deeper Look

- Controlling the Import

- Imports and Consistency

- Direct-mode Imports

- Working with Imported Data

- Imported Data and Hive

- Importing Large Objects

- Performing an Export

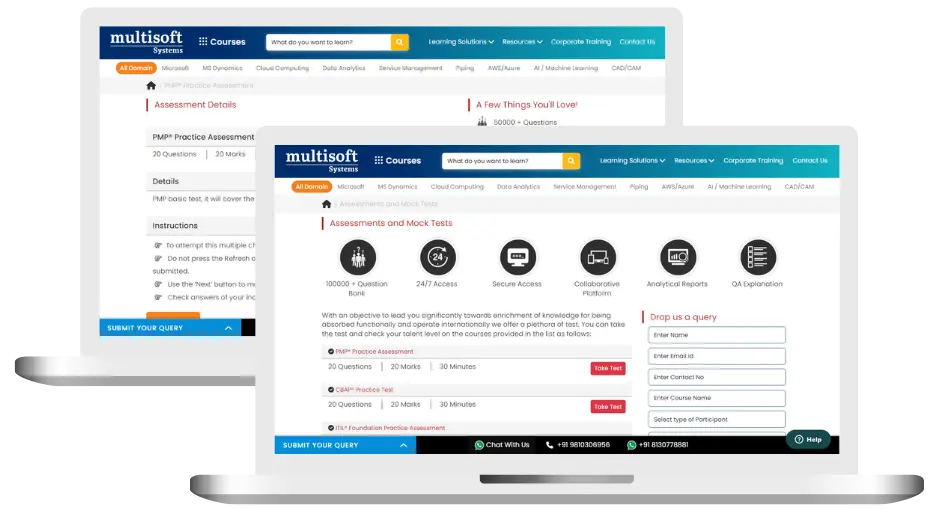

Big Data and Hadoop Training (MCQ) Assessment

This assessment tests understanding of the course content through multiple-choice and short-answer questions, along with analytical thinking, problem-solving ability, and effective communication of ideas. Some Multisoft assessment features:

- User-friendly interface for easy navigation

- Secure login and authentication measures to protect data

- Automated scoring and grading to save time

- Time limits and countdown timers to manage duration

Big Data and Hadoop Corporate Training

Employee training and development programs are essential to the success of businesses worldwide. Our best-in-class corporate training helps you enhance employee productivity and increase your organization’s efficiency. Created by global subject matter experts, our high-quality content is tailored to match your company’s learning goals and budget.

Global Clients

Customized Training

Whether it is the schedule, duration, or course material, you can fully customize the training to suit your learning requirements.

Expert Mentors

360º Learning Solution

Learning Assessment

Certification Training Achievements: Recognizing Professional Expertise

Multisoft Systems is the “one-stop learning platform” for everyone. Get trained by certified industry experts and receive a globally recognized training certificate. Some Multisoft training certificate features:

- Globally recognized certificate

- Course ID & Course Name

- Certificate with Date of Issuance

- Name and Digital Signature of the Awardee

Big Data and Hadoop Training Trainer Profile

20+ Years of Experience

Our Big Data and Hadoop Corporate & Certification Program trainers bring 13+ years of proven industry expertise, delivering practical insights aligned with real project environments.

Trained 3150+ Professionals

Our expert trainers have successfully trained 3350+ professionals through structured, real-time training programs designed for industry readiness and career growth.

Certified Experts & Real-Time Project Learning

Build strong practical skills through live project-based training sessions led by certified industry experts with real-world experience.

Hands-on Learning Approach

Gain practical exposure through real-time scenarios, industry case studies, and hands-on assignments that simulate actual project challenges.

Certification Training Guidance

Receive expert support to prepare effectively, practice strategically, and confidently achieve globally recognized certification success.

Customized Training Delivery

Flexible training approach tailored to individual learning goals, skill levels, and evolving industry requirements for maximum effectiveness.

Big Data and Hadoop Training FAQs

What Attendees are Saying

Our clients love working with us! They appreciate our expertise, excellent communication, and exceptional results. Trustworthy partners for business success.

1K+ Reviews